
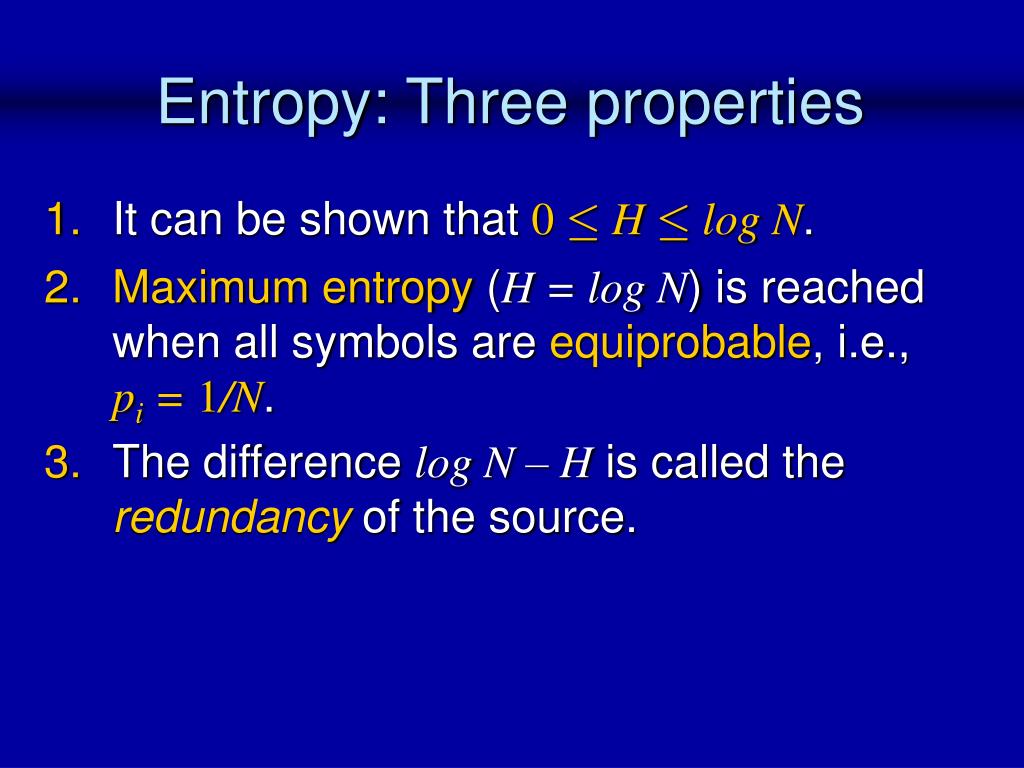

9/1/2023

Entropy information theory

In machine learning, entropy tells us how difficult it is to predict an event. If one class becomes quantitatively dominant, its probability increases accordingly and the entropy decreases. For two classes, the entropy reaches its maximum of 1.00 bit when both classes occur within a superset with identical frequency. Ultimately, there is a whole entropy algebra in which one can calculate back and forth between marginal, conditional and joint entropy.

Entropy is applied in machine learning as an intrinsic measure of difficulty and quality. It is one of the most commonly used measures of impurity. The joint entropy states how many bits are needed to correctly encode both random variables. Conditional entropy plays another role: it clarifies how much entropy remains in one random variable given knowledge of another random variable. Cross-entropy minimisation is used as a method of model optimisation. The entropy change (information gain) is used as a criterion in feature engineering. The Kullback-Leibler divergence measures how far one probability distribution, such as a model, departs from another; because it is not symmetric, it is not a distance in the strict sense.
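The quantities above can be checked numerically. The following is a minimal sketch in Python using only the standard library; the function names and the small joint distribution are hypothetical and chosen purely for illustration. It assumes discrete distributions and measures entropy in bits (base-2 logarithm).

```python
import math

def entropy(probs):
    """Shannon entropy in bits of a discrete distribution."""
    # Terms with p == 0 contribute nothing, so they are skipped.
    return -sum(p * math.log2(p) for p in probs if p > 0)

def kl_divergence(p, q):
    """Kullback-Leibler divergence D(p || q) in bits.

    Assumes q_i > 0 wherever p_i > 0.
    """
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Two classes: entropy is highest when both are equally frequent
# and drops as one class becomes dominant.
balanced = entropy([0.5, 0.5])
skewed = entropy([0.9, 0.1])

# Hypothetical joint distribution P(X, Y) over two binary variables.
joint = {(0, 0): 0.4, (0, 1): 0.1, (1, 0): 0.1, (1, 1): 0.4}

# Joint entropy H(X, Y): bits needed to encode both variables together.
h_xy = entropy(joint.values())

# Marginal P(X), obtained by summing the joint over Y.
p_x = [sum(p for (x, _), p in joint.items() if x == v) for v in (0, 1)]
h_x = entropy(p_x)

# Chain rule: H(Y | X) = H(X, Y) - H(X), the entropy left in Y once X is known.
h_y_given_x = h_xy - h_x

# KL divergence is not symmetric: swapping the arguments changes the value.
kl_forward = kl_divergence([0.5, 0.5], [0.9, 0.1])
kl_reverse = kl_divergence([0.9, 0.1], [0.5, 0.5])

print(f"balanced={balanced:.3f} skewed={skewed:.3f}")
print(f"H(X,Y)={h_xy:.3f} H(X)={h_x:.3f} H(Y|X)={h_y_given_x:.3f}")
print(f"KL forward={kl_forward:.3f} reverse={kl_reverse:.3f}")
```

The chain rule line is one example of the "entropy algebra": given any two of the marginal, conditional and joint entropies, the third follows by addition or subtraction.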

Cross entropy is a measure that originates from the field of information theory and is based on entropy. It quantifies the average number of bits needed to encode events from one distribution when using a code optimised for another distribution. In machine learning, cross entropy is usually used as a loss function.

This article is about the profound misuses, misunderstandings, misinterpretations and misapplications of entropy, the Second Law of Thermodynamics and Information Theory. It is the story of the Greatest Blunder Ever in the History of Science. It is not about a single blunder admitted by a single person.
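To make the use of cross entropy as a loss function concrete, here is a minimal sketch in plain Python. The function name, the one-hot label and the two prediction vectors are hypothetical examples, not taken from any particular library. The sketch uses the base-2 logarithm so the result is in bits; many machine learning libraries use the natural logarithm instead, which only rescales the value.

```python
import math

def cross_entropy(p, q):
    """Cross entropy H(p, q) = -sum_i p_i * log2(q_i), in bits.

    p is the true distribution, q the predicted one.
    Assumes q_i > 0 wherever p_i > 0.
    """
    return -sum(pi * math.log2(qi) for pi, qi in zip(p, q) if pi > 0)

# Hypothetical three-class example: the true class is the second one.
true_label = [0.0, 1.0, 0.0]

# A prediction that puts most probability on the true class ...
good_pred = [0.1, 0.8, 0.1]
# ... and one that puts most probability on a wrong class.
poor_pred = [0.6, 0.2, 0.2]

loss_good = cross_entropy(true_label, good_pred)
loss_poor = cross_entropy(true_label, poor_pred)

print(f"loss for good prediction: {loss_good:.3f} bits")
print(f"loss for poor prediction: {loss_poor:.3f} bits")
```

The loss is smaller when the predicted distribution assigns more probability to the true class, which is why minimising cross entropy pushes a model's predictions towards the observed labels.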
